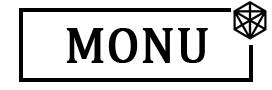

With the explosion of AI tools like ChatGPT, Gemini, and Claude, detecting AI-generated content has become a major concern in education, publishing, and research. Tools like Turnitin, GPTZero, and Originality.ai promise to identify AI-written text—but the real question is:

👉 Can we actually trust AI detection tools in 2026?

Let’s break it down with facts, research, and real-world insights.

🔍 The Short Answer: Partially Reliable, Not Perfect

AI detection tools in 2026 are:

- ✅ Useful for identifying clear AI-written text

- ❌ Not fully reliable for final judgment

Research shows that no tool consistently achieves 100% accuracy across all types of content.

📊 Real Accuracy Numbers (2026 Data)

Here’s what studies and testing reveal:

- Most tools reach about 80–85% accuracy on general content

- Detection drops significantly for edited or mixed content

- False positives (human text flagged as AI) range from 3% to 12%

- Some tools perform as low as 60–70% accuracy in real academic settings

👉 Conclusion: These tools are helpful indicators, not proof.
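
To see why a flag is an indicator rather than proof, it helps to run the numbers. The sketch below uses the 3% to 12% false positive range quoted above. The class size of 200, the 20% share of AI-written submissions, and the 85% catch rate are illustrative assumptions, not figures from any study or tool.

```python
def expected_false_flags(num_human_texts: int, false_positive_rate: float) -> float:
    """Expected number of human-written texts wrongly flagged as AI."""
    return num_human_texts * false_positive_rate


def precision_of_flag(prevalence: float, sensitivity: float,
                      false_positive_rate: float) -> float:
    """Bayes' rule: probability that a flagged text really is AI-written."""
    true_flags = prevalence * sensitivity
    false_flags = (1 - prevalence) * false_positive_rate
    return true_flags / (true_flags + false_flags)


if __name__ == "__main__":
    for fpr in (0.03, 0.12):  # false positive range quoted in the article
        # Assumed: 200 essays, all written by humans.
        flagged = expected_false_flags(200, fpr)
        print(f"FPR {fpr:.0%}: about {flagged:.0f} of 200 human essays flagged")

        # Assumed: 20% of submissions are AI-written, detector catches 85% of them.
        p = precision_of_flag(prevalence=0.20, sensitivity=0.85,
                              false_positive_rate=fpr)
        print(f"FPR {fpr:.0%}: a flag is correct about {p:.0%} of the time")
```

Under these assumed inputs, between 6 and 24 honest writers out of 200 would be flagged, and a flag would be right only about 64% to 88% of the time. Change the assumptions and the numbers move, but the pattern holds: the rarer AI-written text is in the pool, the less a single flag tells you.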

🧠 Why AI Detection Tools Struggle

1. AI is Getting Smarter

Modern AI tools can:

- Mimic human tone

- Add variation in writing

- Avoid predictable patterns

This makes detection harder over time.

2. Edited Content Breaks Detection

Even small changes can reduce detection accuracy:

- Light paraphrasing drops accuracy by 15–30%

- Mixed (AI + human) content is hardest to detect

3. False Positives Are a Serious Issue

Sometimes tools flag real human writing as AI.

- Non-native English writers are more likely to be flagged

- Academic writing style often looks “AI-like”

👉 This creates fairness concerns in education.

4. Context Matters (But Tools Ignore It)

AI detectors analyze:

- Sentence patterns

- Predictability

- Writing structure

But they don’t understand intent or originality like humans do.
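
To make "surface patterns" concrete, here is a toy sketch of two signals of this kind: how much sentence length varies, and how varied the vocabulary is. This is not how Turnitin, GPTZero, or any other real detector works. The function names and both measures are assumptions chosen only to show that such features describe the shape of the text, not its intent.

```python
import re
import statistics


def sentence_lengths(text: str) -> list:
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation of sentence length (0.0 means perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # too little text to measure anything
    return statistics.pstdev(lengths) / statistics.mean(lengths)


def type_token_ratio(text: str) -> float:
    """Share of distinct words among all words: a rough vocabulary-variety measure."""
    words = re.findall(r"[a-z']+", text.lower())
    return len(set(words)) / len(words) if words else 0.0


if __name__ == "__main__":
    uniform = "The cat sat down. The dog ran off. The bird flew away."
    varied = "Stop. The storm rolled in over the hills before anyone noticed."
    for label, sample in (("uniform", uniform), ("varied", varied)):
        print(label, round(length_variation(sample), 2),
              round(type_token_ratio(sample), 2))
```

A careful human writer with a very even style would score as "uniform" here, which is exactly the kind of mistake a pattern-based signal can make.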

⚠️ Real-World Problems in 2026

- Students are being wrongly accused due to detection errors

- New AI models are becoming harder to detect

- Detectors sometimes misclassify genuine human-written content as AI

👉 This shows the technology is still evolving and imperfect.

🧪 What AI Detection Tools Are Good At

They work best for:

- Fully AI-generated (unedited) content

- Large text samples

- Identifying patterns in simple writing

Some tools can reach 90%+ accuracy in these cases.

❌ Where They Fail

AI detectors struggle with:

- Edited or paraphrased AI content

- Mixed human + AI writing

- Technical or academic writing

- Short text samples

In some cases, accuracy can drop below 50% for complex scenarios.

🧠 Expert Insight: Why Detection is So Hard

Academic research shows that:

- AI detectors often rely on surface patterns, not true understanding

- They fail when writing style changes or context shifts

- Performance drops in real-world scenarios vs lab tests

👉 In simple terms:

They guess based on patterns—they don’t “know” for sure.

🎯 So, Should You Trust AI Detectors?

✔️ YES — Use Them As a Guide

- Helpful for identifying suspicious content

- Good for initial screening

❌ NO — Don’t Rely on Them Alone

- Not accurate enough for final decisions

- Should not be the only evidence

✅ Best Way to Use AI Detection Tools

Use them smartly:

- Combine with human review 👀

- Check writing style consistency

- Look for citations and originality

- Use them as a support tool, not a judge
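
The checklist above can be sketched as a small triage routine. Everything here is hypothetical: the `Submission` fields, the 0.8 score threshold, and the 300-word minimum are placeholder assumptions, not recommendations from any detector vendor. The point of the design is that a detector score can only trigger a human review, never a verdict.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    detector_score: float           # hypothetical 0.0-1.0 "likely AI" score
    word_count: int
    style_matches_past_work: bool   # result of a human style-consistency check
    has_verifiable_citations: bool  # result of a human citation check


def triage(sub: Submission, review_threshold: float = 0.8,
           min_words: int = 300) -> str:
    """Return a next step; no branch ever returns a finding of misconduct."""
    if sub.word_count < min_words:
        # Short samples are where detectors are least reliable.
        return "ignore score: sample too short"
    if sub.detector_score < review_threshold:
        return "no action"
    if sub.style_matches_past_work and sub.has_verifiable_citations:
        return "no action: other evidence contradicts the score"
    return "human review"


if __name__ == "__main__":
    print(triage(Submission(0.95, 900, False, True)))
```

Both thresholds are arbitrary and would need tuning against whatever tool and writing samples you actually use.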

🚀 Final Verdict (2026 Reality)

AI detection tools in 2026 are:

👉 Useful but not reliable enough to be trusted blindly

They are improving—but still face major challenges like:

- False positives

- Evasion by AI tools

- Difficulty detecting hybrid content

💡 Final Advice

Whether you’re a student, researcher, or content creator:

- Don’t depend only on AI tools

- Focus on original writing and clear understanding

- Use AI responsibly—as a helper, not a shortcut

AI detection is evolving… but human judgment is still the most reliable tool ✅